In San Salvador, a government app screens you for diabetes and hypertension, then sends you to a private lab to pay for your own blood work. In Medellín, a community health worker named Luz Marina Agudelo has been walking the same hillside route since 2011, checking blood pressure with a manual cuff, adjusting medications on the spot, referring people to a public clinic three blocks downhill. One of these is called innovation. The other is called underfunded.

El Salvador’s president announced in December 2023 that Google’s AI will monitor the country’s chronic patients. The app identifies who is at risk and directs them toward private laboratories, specialist consultations, AI-generated diagnoses. Le Monde, a major French newspaper, reported the basics. What nobody in that coverage asked is the question that would occur to anyone who has ever managed a chronic condition on a budget: what happens after the screen?

I know what happens after the screen. I manage a spinal cord injury, which affects how my body controls several systems. This means I also manage pressure sores, urinary tract infections, bone density loss, and a cardiovascular system that does not regulate itself the way yours does. I have been screened, flagged, monitored, and referred more times than I can count. In December 2022, a clinic in South London told me my bone density scan showed early osteoporosis, a condition where bones become progressively weaker. They referred me to a specialist. The specialist had a four-month wait. The scan cost nothing. The follow-up cost time I did not have and transport I could not easily arrange. The gap between the flag and the care was four months wide and nobody was responsible for it.

That gap is the product El Salvador just bought.

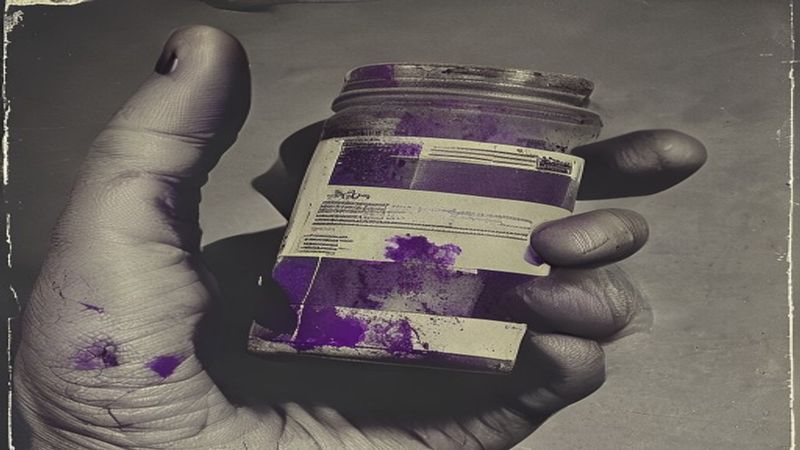

Here is what the app does. It collects symptoms. It runs them against a model trained on data from populations that may or may not resemble the people using it. It produces a risk score. It tells you to go somewhere and pay someone. The monitoring is real. The care is a referral to a market.

Sunaura Taylor, a disability scholar, has argued that what counts as a “normal” body determines who gets resources and who gets managed. This AI screens for illness, then directs you to buy treatment. That is not health management. It is sorting. The sort has two outcomes: you can pay, or you cannot. If you cannot, the system has still captured your data. You are now a known diabetic in a Google database. The screening was free. You were the price.

I want to be fair. Genuinely. El Salvador’s public health system is stretched past breaking. Chronic illness is rising across Central America. The impulse to use technology to reach people who cannot reach a clinic is not stupid. It is not cynical, at least not at the point of origin. A minister sitting in a room with a Google sales team, watching a clean slide deck about AI-powered population health, is seeing a real problem and a solution that looks like it scales. I understand the appeal. I have sat in rooms like that. In March 2020, a transport consultancy showed me a simulation of wheelchair-accessible route planning powered by machine learning. Beautiful interface. I asked where the data on broken pavement came from. Silence. The model assumed the city matched its own map.

The mechanism is the same. The map is not the territory. The screen is not the care. Anna Hamilton wrote about this split in her essay “Brain Drain: Chronic Illness as Disability,” describing how chronic illness gets counted as a medical event rather than a daily negotiation: a thing that happens to you at appointments rather than a thing you live inside. An app that flags you once and refers you out has mistaken the appointment for the condition.

Medellín’s community health worker programme is not perfect. Agudelo told a Colombian radio interviewer in 2023 that she sometimes cannot get the medications she needs to distribute. Supply chains break. Funding fluctuates. But she knows which of her patients lives on the fourth floor without a lift. She knows who cannot read the label on a pill bottle. She knows whose husband will not let them leave the house alone to visit a clinic. The AI knows none of this. The AI does not need to know, because the AI’s job ends at the referral. Everything after the referral is your problem.

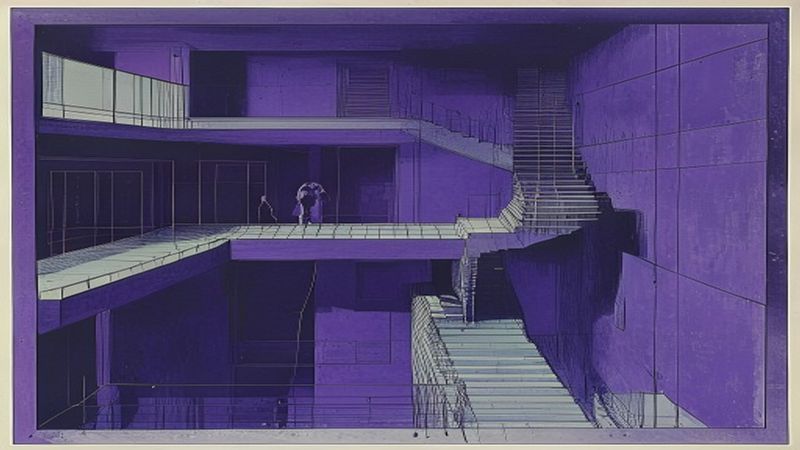

Henri Lefebvre argued that space is produced by the people who use it, not the people who design it. Healthcare works the same way. A system designed from above (clean, scalable, AI-powered) produces a space that looks like coverage. From below, from inside a body that needs continuity, that needs someone to remember what happened last time, that needs a human being to notice that you have not shown up in two weeks, it produces nothing. It produces a data point where a relationship should be.

I think about procurement a lot. I think about what it costs to pay a community health worker a salary versus what it costs to license an AI platform from one of the world’s largest corporations. I think about who holds the data when the contract ends. I think about a government that imprisoned tens of thousands of people without trial now building a biometric health database. I think about how “monitoring” is a word that means two completely different things depending on which side of the screen you are on.

Luz Marina Agudelo climbs the same hill every Tuesday, cuff in her bag, and the hill has not gotten any flatter.

This article was prompted by “El Salvador’s president entrusts monitoring of chronic patients to Google’s AI,” from Le Monde English.