A ride-hailing platform in Jakarta deactivated a driver this month for what it called “inconsistent availability patterns.” She had lupus. The system read her flares as unreliability. I know this because a colleague in information design sent me the screenshot of her account status page — green, green, green, red, red, deactivated — and asked me what I saw. I saw a heatmap that punishes deviation from a straight line.

The Conversation, a digital news outlet, published a piece this week about how AI-driven gig platforms worsen the burden on Indonesia’s female workers. The analysis is sharp. Menstruation, pregnancy, caregiving — these disrupt the rhythm the algorithm expects, and the algorithm disciplines the disruption. The article names this as a gendered injustice. It is. But the frame stops one step too early. The algorithm does not punish femininity. It punishes any body that cannot perform consistency. Gender is one axis. The whole architecture is built on a deeper fiction: that a reliable worker is a predictable one.

I design information systems. I have spent years looking at dashboards, status screens, scheduling grids. The thing that structures all of them is a baseline: a model of what “normal” output looks like, rendered as a line, a colour, a threshold. In March 2023, I consulted on a delivery and transport logistics platform in Rotterdam. The scheduling interface was elegant: a clean grid, one row per worker, green cells for completed shifts, amber for partial shifts, red for missed ones. A project manager told me: “The system sees patterns before we do.” He meant this as a compliment. I asked what counted as a pattern. He said: “Three ambers in a row, the system flags you.” I asked who reviews the flags. He said nobody reviews them. The flags feed a scoring model. The model decides who gets offered the next shift.

Nobody built that system to exclude anyone. That is the point. The designer imagined a body. The body was consistent. The body showed up at the same time, worked the same hours, maintained the same pace. The system was optimised for that body. Every other body is noise.

Vilém Flusser, a media theorist, wrote in 1983 that technical images do not represent the world — they project a model of it and then replace the world with the model. He was talking about photography. He could have been talking about a shift-scheduling dashboard. The green row is not a record of a worker. It is an image of the worker the platform wants. The real worker, the one with lupus flares or fatigue cycles or a body that runs on a rhythm the system has no field for, gets measured against the image. The image wins every time.

You might say: but this is just optimisation. Platforms need to predict supply. Fair. Prediction is not neutral, though. Every prediction model contains a silhouette of the body it expects. Otto Neurath, a philosopher and social theorist, understood this in the 1930s when he built the Isotype system, a form of pictorial statistics meant to make social data understandable to anyone. His partner Marie Neurath later admitted that deciding which figures to include and which to leave out was the entire political act. The figure standing in for “worker” was always upright, always symmetrical. The figures that didn’t fit the template weren’t distorted. They were simply absent.

The gig platform does the same thing at scale. It doesn’t reject you. It just stops showing you shifts. The interface looks identical whether you are deactivated or simply unseen. I have stared at enough admin panels to know: the visual language of exclusion is silence. No red banner. No error message. The row just goes blank.

In January 2025, I sat in a design review for a delivery platform’s driver-facing app. Someone had proposed adding a “flexibility score”, a metric that would reward drivers who accepted last-minute shifts. I asked what happened to drivers whose conditions made last-minute shifts impossible. The room went quiet, with exactly the kind of silence I recognise from other rooms. Then someone said: “That’s an edge case.” I wrote it down. I wrote it down because I have been called an edge case in four countries and two languages, and the phrase is always delivered in the same tone: sympathetic, final.

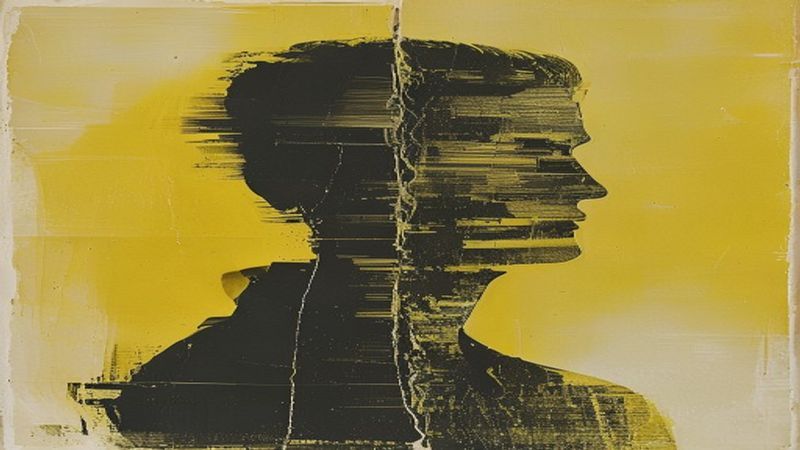

The problem is not that the algorithm discriminates. The problem is that the algorithm has a body in mind and that body is not disclosed. No platform publishes the model worker. No interface shows you the silhouette you are being compared against. The scoring is legible — green, amber, red — but the standard behind the scoring is invisible. I design visual systems. The thing I know in my hands is that the most powerful information is the information a system chooses not to display.

Astra Taylor, writing about automation’s hidden labour costs, put it plainly: “The promise of the future is used to justify the exploitation of the present.” That sentence was about technology broadly, but it describes every gig platform scheduling screen I have ever seen. The screen says: here is your opportunity. The screen does not say: here is the body we designed the opportunity for.

The driver in Jakarta saw green, green, green, red, red, then nothing — which is also a kind of heatmap, if you read it as a picture of a body the system could not hold, rendered in the only language the system knows, which is colour, which is silence.

This article was prompted by “Algorithms don’t care: how AI worsens the double burden for Indonesia’s female gig workers”, published by The Conversation.