Every notification you’ve ever received was built on the assumption that you can hear it. The triple-chime of a Slack message, the ascending tones of an iPhone alarm, the synthetic doorbell of a Ring camera — these are not neutral design choices. They are hearing-centric defaults masquerading as universal solutions, and they have trained an entire industry to treat sound as the primary channel of urgency. I design interfaces for a living, and I am deaf, and I am here to tell you that the most information-rich sensory channel in digital design is the one your industry has almost entirely neglected: vibration. Not vibration as a fallback. Not vibration as a pale substitute tacked onto an audio alert. Vibration as a primary, sophisticated, deeply expressive medium of communication — one that hearing designers cannot fully conceptualize because they have never had to build a life inside it.

The haptic ghetto

When I say vibration, most designers picture the single crude buzz of a phone on a table. That buzz is to haptic communication what a foghorn is to spoken language: one blunt signal in a universe of possible articulations. The reason your phone’s vibration vocabulary is so impoverished has nothing to do with hardware limitations. Apple describes its Taptic Engine as a precise linear actuator, and it is capable of producing textures, rhythms, pressures, and durations that could rival a small percussion ensemble. Android devices increasingly ship with similarly capable motors. The hardware is there. What is missing is a design culture that takes vibration seriously as a language rather than treating it as the accessibility annex — the sad little room off the main hall where deaf and hard-of-hearing users are expected to be grateful for whatever scraps of information make it through. I call this the haptic ghetto: the space in interaction design where tactile feedback is permanently subordinated to audio, given fewer resources, less iteration, less grammar. It persists not because of technical constraints but because of an ableist hierarchy of the senses in which hearing is treated as cognitively superior, more nuanced, more worthy of design investment. That hierarchy is wrong, and it is costing everyone — not just deaf users — a massive amount of information.

Consider the watch on your wrist. Apple Watch offers a set of distinct haptic patterns, including a tap for notifications, a double-tap for certain alerts, and a sustained pulse for timers. Compare this with the hundreds of distinct notification sounds available in iOS. The asymmetry is staggering, and it reveals the real design priority. Sound gets a lexicon. Vibration gets a grunt. Christine Sun Kim, the deaf artist whose performances and public talks relentlessly interrogate the social ownership of sound, has explored how hearing people treat sound as “their property”: something they control, define, and gatekeep. Haptic design in tech is a direct extension of that gatekeeping. The people who design these systems hear. They test these systems by listening. They evaluate information hierarchy through audio fidelity. Vibration is what they add at the end, if the accessibility checklist demands it, and they give it the design equivalent of a shrug.

What my body already knows

I have lived inside vibration my entire life. When I was a child, I learned to place my hand on the family dog’s ribcage to feel him growl before I could see his teeth. I navigate city streets by registering the subsonic tremor of truck engines through pavement. I feel the structural bass of a building’s HVAC system shift when someone opens a door three rooms away. This is not a superpower. This is not “overcoming.” This is what happens when you live in a sensory world that the dominant culture considers secondary: you develop granularity. You build a haptic vocabulary that hearing people never need and therefore never acquire, and then those same people design your devices and give you a single buzz for everything from “your mother is calling” to “your house is on fire.”

Deaf and deafblind communities have always known that vibration carries complex information. As Haben Girma has described in her writing, tactile communication between DeafBlind people involves pressure, speed, location on the body, and rhythm — a full grammar transmitted through touch. The Protactile movement, developed by DeafBlind scholars and community members like Jelica Nuccio and aj granda, as documented in the Protactile Language Emergence Project, has formalized an entire linguistic system based on touch and vibration, one that operates with the sophistication of any spoken or signed language. These are not workarounds. These are mature communication systems that predate and outperform anything the tech industry has attempted with haptics. And yet when Apple or Google designs a new haptic vocabulary, they do not consult Protactile linguists. They do not hire deaf interaction designers to lead the work. They hand the project to audio designers who have been asked to “also think about vibration,” as if that is the same thing as knowing what vibration can do when it is your primary channel.

DeafBlind tactile communication in practice — the sophisticated grammar of Protactile language that the tech industry has not yet consulted in haptic design

The architecture of a missed language

What would it look like to design haptics with the same richness we currently reserve for sound? I have spent years sketching this out. A notification system built on haptic grammar would use at least five distinct parameters: rhythm (the temporal pattern of pulses), intensity (how hard), duration (how long each pulse sustains), texture (sharp versus smooth attack, which the Taptic Engine can produce), and location (which part of the device or wearable produces the vibration). Combine these and you get a design space with hundreds of distinguishable signals — more than enough to differentiate between a text from your partner, a calendar reminder, a severe weather alert, and a breaking news notification, all without looking at a screen or hearing a sound. This is not speculative design fiction. This is engineering that could ship in a software update if anyone with decision-making power understood the sensory channel well enough to prioritize it.
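To make the arithmetic behind that claim concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the level names, the number of levels per parameter, the `HapticSignal` type, and the four assignments in `VOCABULARY` are hypothetical and do not come from any shipping haptics API or from measured perceptual thresholds. It shows only how quickly five modest parameters multiply into a vocabulary, and how one might check that the signals assigned to different notifications are not easily confused.

```python
from dataclasses import astuple, dataclass
from itertools import combinations, product

# Hypothetical level sets. The counts are illustrative assumptions,
# not measured limits of what skin can distinguish.
RHYTHMS = ("single", "double", "triple", "long-short")
INTENSITIES = ("light", "medium", "strong")
DURATIONS_MS = (40, 120, 400)
TEXTURES = ("sharp", "smooth")
LOCATIONS = ("wrist-top", "wrist-underside", "phone")


@dataclass(frozen=True)
class HapticSignal:
    """One signal described by the five parameters named in the essay."""
    rhythm: str
    intensity: str
    duration_ms: int
    texture: str
    location: str


def design_space():
    """Enumerate every combination of the five parameters."""
    return [
        HapticSignal(*combo)
        for combo in product(RHYTHMS, INTENSITIES, DURATIONS_MS, TEXTURES, LOCATIONS)
    ]


# Hypothetical assignments for the four notification types mentioned above.
VOCABULARY = {
    "partner_text": HapticSignal("double", "light", 120, "smooth", "wrist-top"),
    "calendar_reminder": HapticSignal("single", "medium", 120, "sharp", "wrist-top"),
    "severe_weather": HapticSignal("triple", "strong", 400, "sharp", "wrist-underside"),
    "breaking_news": HapticSignal("long-short", "medium", 40, "smooth", "phone"),
}


def differing_parameters(a, b):
    """Count how many of the five parameters differ between two signals."""
    return sum(x != y for x, y in zip(astuple(a), astuple(b)))


def min_pairwise_difference(vocab):
    """Smallest number of differing parameters across all pairs in a vocabulary."""
    return min(
        differing_parameters(a, b) for a, b in combinations(vocab.values(), 2)
    )


if __name__ == "__main__":
    print(len(design_space()))                  # 4 * 3 * 3 * 2 * 3 = 216
    print(min_pairwise_difference(VOCABULARY))  # 3
```

With these assumed levels the space holds 216 signals, and every pair in the four-item vocabulary differs on at least three parameters. Whether a given pair is actually distinguishable on a wrist is a question for people who live in the channel, not for a combinatorics script.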

As designer Liz Jackson wrote, the “disability dongle” (the well-meaning but useless product designed by nondisabled people to solve problems disabled people don’t actually have) is a persistent failure mode in accessible design, and the current state of haptic design fits that pattern precisely. It is dongles all the way down. Consider the “vibrating alarm clocks for deaf people” that offer a single brutal motor set to maximum because the designers assumed that if you can’t hear, what you need is more force. The entire framing reveals the poverty of imagination: vibration as loudness substitute rather than vibration as information architecture. What I want is not a louder buzz. What I want is a haptic system that communicates as much as a ringtone does for hearing users (identity, urgency, category, source), all through the skin, and designed by people who live in that channel, not people visiting it out of compliance obligation.

The body as interface

Disability justice, as articulated in Patty Berne’s “Disability Justice – A Working Draft” and in the work of Sins Invalid, and as advanced by Mia Mingus and Leah Lakshmi Piepzna-Samarasinha, insists on the leadership of those most impacted. In haptic design, those most impacted are the deaf and DeafBlind communities who have already done the foundational work of understanding touch as language. The knowledge exists. The expertise exists. What does not exist is the institutional willingness to treat that expertise as design leadership rather than “user research”: the difference between being the person who defines the product and being the person who reacts to a prototype someone else already built. I have sat in both chairs. The second chair is where deaf designers are almost always placed, brought in late to validate decisions that were made without us, nodding politely at haptic patterns that communicate nothing because they were designed by people who experience vibration as noise rather than signal.

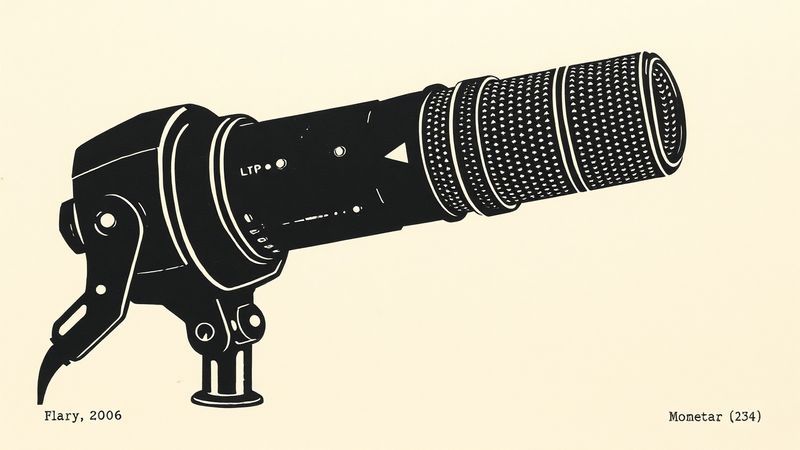

Haptic design as primary language — vibration patterns mapped as a full sensory grammar rather than an accessibility fallback

The hearing world has spent decades refining the semiotics of digital sound: the Intel chime, the Netflix ta-dum, the Mac startup chord. These are sonic logos designed with obsessive attention to emotional resonance, memorability, and meaning. No equivalent craft exists for vibration, and that absence is not an oversight. It is a statement about whose perception counts. Every time a designer says “we added haptic feedback” and means a single formless buzz, they are telling me that my sensory world does not merit the same creative investment as theirs.

What vibration holds

The most interesting design problems are never about adding accessibility to an existing paradigm. They are about recognizing that the paradigm was incomplete from the start — that it was built on a sensory monoculture, and that the people it excluded were not deficient but differently fluent. Vibration is not sound’s understudy waiting in the wings. It is a parallel language with its own syntax, its own poetics, its own capacity for beauty and precision, already spoken fluently by communities that the tech industry has not yet learned to listen to — if listening is even the right word, which, for once, it is not.